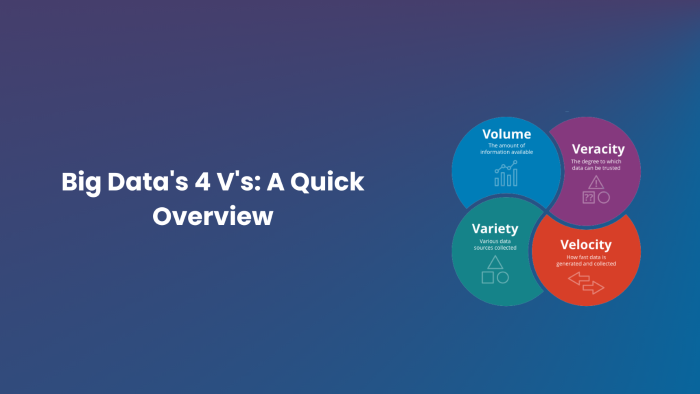

Big Data’s 4 V’s: A Quick Overview

The term “big data” seems to pop up everywhere these days. Many companies, organisations, and industries use big data to gain insights, enhance decision-making, and stay ahead of the competition. However, the term refers to more than just the sheer amount of information stored; it also encompasses the “4 V’s of Big Data.” This post presents the 4 V’s (volume, velocity, variety, and veracity) and discusses why it is important to understand them, especially for those considering enrolling in Big Data and Analytics Training.

Table of contents

- The Value of Analytics and Big Data Education

- Volume

- Velocity

- Variety

- Veracity

- Beyond the Big Four: Extra Traits

- Conclusion

The Value of Analytics and Big Data Education

Before discussing the 4 V’s of big data, let’s emphasise the importance of big data and analytics training.

- Big data and analytics training helps people analyse massive datasets and draw conclusions from them.

- Skills in big data analysis and interpretation are in demand. Such a course can lead to careers in data analysis, data science, and business intelligence.

- Training enables employees to make better decisions, drive business growth, and tackle difficult problems in a data-driven society.

- Companies that know how to analyse and use big data successfully have a leg up on the competition. Business success in today’s data-driven environment depends heavily on the contributions of trained experts.

Let’s dive into the 4 V’s of big data:

Volume

The term “volume” is used to describe the massive amounts of information that are created and stored by businesses. Customer interactions, sensors, social media, and other channels may all contribute to this data. Organisations have amassed petabytes or even exabytes of data as the amount of information has increased dramatically in recent years.

Large datasets need specialised tools and methods for management and analysis. Big data professionals must learn to store, process, and analyse huge datasets efficiently.
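One common way to cope with volume is to process data in fixed-size chunks so the full dataset never has to fit in memory at once. The following is a minimal sketch of that idea using only the Python standard library; the function names `chunked` and `total_in_chunks` are hypothetical and chosen purely for illustration, and real projects would typically rely on a dedicated big data framework instead.

```python
from itertools import islice
from typing import Iterable, Iterator, List


def chunked(records: Iterable[int], size: int) -> Iterator[List[int]]:
    """Yield successive lists of at most `size` records."""
    iterator = iter(records)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def total_in_chunks(records: Iterable[int], size: int = 1000) -> int:
    """Sum a stream of numbers one chunk at a time."""
    running_total = 0
    for chunk in chunked(records, size):
        # Only one chunk is held in memory at any moment.
        running_total += sum(chunk)
    return running_total


if __name__ == "__main__":
    # A generator stands in for a data source too large to load at once.
    print(total_in_chunks((n for n in range(1_000_000)), size=10_000))
```

The same pattern applies to files or database cursors: read a slice, update a running result, and discard the slice before reading the next one.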

Velocity

Data velocity is the pace at which new information is created, collected, and analysed. Data is being produced at an unprecedented rate because of the proliferation of real-time data sources like IoT devices and social media. Organisations that need to respond quickly to changing conditions and make near-real-time decisions must prioritise speed.

Experts in big data and analytics should be well-versed in streaming analytics and data ingestion techniques so that they can analyse data as it arrives.
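To make "analysing data as it arrives" concrete, here is a small sketch of a rolling average computed over a stream of readings. It is an illustration only: `rolling_mean` is a hypothetical helper, the readings are made-up sample values, and production systems would normally use a streaming platform rather than a plain Python generator.

```python
from collections import deque
from typing import Deque, Iterable, Iterator


def rolling_mean(readings: Iterable[float], window: int) -> Iterator[float]:
    """Yield the mean of the most recent `window` readings after each arrival."""
    recent: Deque[float] = deque(maxlen=window)  # old readings drop off automatically
    for value in readings:
        recent.append(value)
        yield sum(recent) / len(recent)


if __name__ == "__main__":
    # Made-up sensor readings, processed one at a time as they "arrive".
    for mean in rolling_mean([21.0, 21.5, 23.0, 22.5], window=2):
        print(mean)
```

Because the function is a generator, each result is available as soon as its reading arrives, without waiting for the stream to end.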

Variety

The term “variety” refers to the wide range of data types that businesses process. Structured data lives in relational databases, semi-structured data comes in formats like XML and JSON, and unstructured data includes text files, photos, and videos. The many sources that data comes from, such as company databases, social media, and sensors, also contribute to this variety.

Experts in big data must be able to convert between data formats and combine information gathered from different sources. This often requires preparing and transforming the data first.
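As a rough illustration of combining sources in different formats, the sketch below merges a CSV extract with a JSON extract into one common record shape. Everything in it is assumed for the example: the sample data, the field names (`customer_id`, `name`, `last_order`), and the helper `merge_sources` are hypothetical.

```python
import csv
import io
import json
from typing import Dict, List

# Made-up sample data standing in for two different sources.
CSV_TEXT = "customer_id,name\n1,Asha\n2,Ben\n"
JSON_TEXT = '[{"customer_id": 1, "last_order": "2024-01-05"}]'


def merge_sources(csv_text: str, json_text: str) -> List[Dict[str, object]]:
    """Join CSV customer rows with JSON order rows on customer_id."""
    # Index the JSON records by id so each CSV row can look up its match.
    orders = {int(row["customer_id"]): row for row in json.loads(json_text)}
    merged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        customer_id = int(row["customer_id"])  # CSV values arrive as strings
        order = orders.get(customer_id, {})
        merged.append(
            {
                "customer_id": customer_id,
                "name": row["name"],
                "last_order": order.get("last_order"),  # None when no order exists
            }
        )
    return merged


if __name__ == "__main__":
    for record in merge_sources(CSV_TEXT, JSON_TEXT):
        print(record)
```

Note the type conversion on `customer_id`: the CSV source delivers strings while the JSON source delivers integers, which is a typical example of the preparation step needed before sources can be combined.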

Veracity

The veracity of data refers to how reliable it is. Unfortunately, not all big data is clean and accurate. Inconsistencies, errors, missing values, and uncertainty in the data all undermine its reliability. Maintaining high-quality data is crucial for accurate reporting and sound decision-making.

In big data initiatives, data quality is of paramount importance. Professionals need expertise in data cleaning, validation, and quality assurance.
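A basic cleaning and validation pass might look something like the sketch below. The rules it applies (a non-empty unique `id` and an `age` between 0 and 120) are assumptions made up for this example, as are the function names; real validation rules depend on the dataset and the business context.

```python
from typing import Dict, List, Optional, Tuple

Record = Dict[str, Optional[str]]


def parse_age(raw: Optional[str]) -> Optional[int]:
    """Return a plausible age as an int, or None if missing or invalid."""
    try:
        age = int(raw)  # int() tolerates surrounding whitespace
    except (TypeError, ValueError):
        return None
    return age if 0 <= age <= 120 else None


def clean_records(records: List[Record]) -> Tuple[List[Dict[str, object]], List[Record]]:
    """Split raw records into cleaned rows and rejected rows."""
    clean: List[Dict[str, object]] = []
    rejected: List[Record] = []
    seen_ids = set()
    for record in records:
        record_id = (record.get("id") or "").strip()
        age = parse_age(record.get("age"))
        # Reject missing ids, duplicate ids, and missing or implausible ages.
        if not record_id or record_id in seen_ids or age is None:
            rejected.append(record)
            continue
        seen_ids.add(record_id)
        clean.append({"id": record_id, "age": age})
    return clean, rejected
```

Keeping the rejected rows rather than silently dropping them makes it possible to report on data quality and to investigate where bad records come from.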

Beyond the Big Four: Extra Traits

The 4 V’s give a core understanding of big data, but some experts have extended the concept with additional traits. These include:

- Value: Big data is only worthwhile if it can be turned into actionable insights and results for organisations. Professionals need to pay close attention to how they can extract useful insights from data.

- Validity: Validity measures how accurate and applicable the data is for its intended use. Verifying the accuracy of collected data is essential to avoid poor inferences and decisions.

- Volatility: Volatility is the rate at which data changes over time. Stock market data, for example, shifts constantly, whereas historical records are more stable. When developing analytics solutions, professionals should consider how quickly data becomes outdated.

Conclusion

Data analytics and big data experts can’t succeed without a firm grasp of the four Vs of big data: volume, velocity, variety, and veracity. Companies are always looking for skilled workers who can handle the challenges of big data, do thorough analyses, and draw meaningful conclusions. Training programmes in big data and analytics provide participants with the information and abilities necessary to succeed in today’s data-driven world. The future of organisations and industries will be largely shaped by those who can make sense of the ever-increasing amounts of data.